Are you aware of how to fix the Couldn’t Fetch Sitemap Error in Search Console? Sitemaps provide information to search engines about what pages are important and should be crawled.

Not every website needs a sitemap, especially small websites with fewer than 100 URLs. A small website can therefore often do without one.

Search engines will find your pages more quickly if your home page links to all your most important pages. This article explains how you can fix the sitemap errors.

Important Rules for Creating an XML Sitemap

There are some essential rules in creating an XML sitemap that you should follow. These are as follows:

- Create a sitemap at the root of the website as a best practice

- Create and submit the sitemap for the website’s preferred URL

- You should avoid non-canonical URLs, redirected URLs, or URLs with 404 status

- Relative URLs should be avoided in favor of absolute URLs

- A sitemap should stay within the 50 MB limit (uncompressed) and should not contain more than 50,000 URLs.

- Robots.txt should not block the sitemap or any URLs contained within it.

- Make sure the sitemap is encoded in UTF-8.

- Submitting a sitemap to Google does not guarantee that the Google bot crawls all its URLs.

Submitting an XML sitemap to Google will assist the search engine in crawling your website’s URLs.

However, there is no guarantee that Google will crawl all the URLs listed in the sitemap or that Google will crawl the sitemap more frequently.

Therefore, creating valuable content and updating the sitemap frequently can help Google crawl your sitemap more efficiently.
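Several of the rules above (absolute URLs, the 50,000 URL cap, the 50 MB cap, UTF-8 encoding) can be checked automatically before you submit anything. The following is a minimal Python sketch, not an official validator; the function name `check_sitemap_rules` is made up for illustration, and it assumes the file is already well-formed XML.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50 MB, uncompressed


def check_sitemap_rules(xml_bytes: bytes) -> list[str]:
    """Return a list of rule violations found in a sitemap (empty if none)."""
    problems = []

    if len(xml_bytes) > MAX_BYTES:
        problems.append("file is larger than 50 MB uncompressed")

    # The sitemap must be UTF-8 encoded; stop early if it is not.
    try:
        xml_bytes.decode("utf-8")
    except UnicodeDecodeError:
        problems.append("file is not valid UTF-8")
        return problems

    # Assumes well-formed XML; a parse error would raise ET.ParseError here.
    root = ET.fromstring(xml_bytes)
    locs = [el.text.strip() for el in root.iter(SITEMAP_NS + "loc") if el.text]

    if len(locs) > MAX_URLS:
        problems.append("more than 50,000 URLs")

    # Every <loc> must be an absolute http(s) URL, not a relative path.
    for loc in locs:
        parts = urlparse(loc)
        if not (parts.scheme in ("http", "https") and parts.netloc):
            problems.append(f"relative or malformed URL: {loc}")

    return problems
```

An empty list means none of these particular rules were broken. The sketch does not detect redirected, non-canonical, or 404 URLs, which would require requesting each URL.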

Is the sitemap accessible? How can this be verified?

It is imperative to check the validity of your sitemap before attempting to resolve the Couldn’t Fetch Sitemap Error on Search Console.

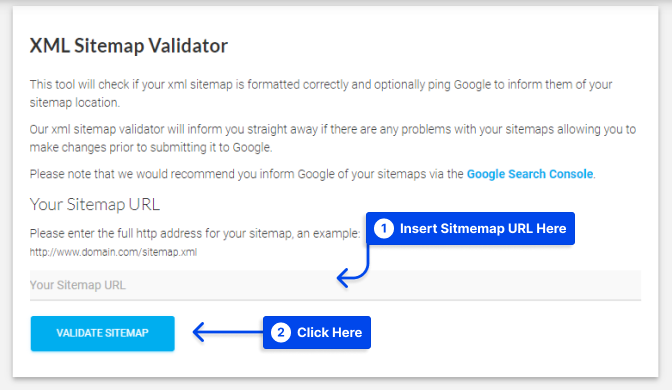

To do this, you can use XML Sitemap Validator. It is a Google sitemap checker that tells you whether a sitemap is valid and whether it is formatted properly.

Follow these steps to do this:

- Open the XML Sitemap Validator

- Enter your sitemap’s address to check it

With these two simple steps, you can check whether the sitemap is accessible and valid. This tool checks if your XML sitemap has been formatted correctly and can notify Google of its location.

By using this sitemap validation tool, you will be immediately notified of any errors in your sitemaps and therefore be able to correct them before submission to Google.
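If you prefer a quick local check before using an online validator, the core of such a check can be sketched in a few lines of Python. This is only an approximation of what a validator does, and `validate_sitemap_xml` is a hypothetical helper name: it confirms that the document is well-formed XML, that its root element is a sitemap `urlset` or `sitemapindex`, and that it contains at least one `<loc>` entry.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def validate_sitemap_xml(xml_bytes: bytes) -> tuple[bool, str]:
    """Check that the document is well-formed XML with a sitemap root element."""
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        return False, f"not well-formed XML: {exc}"

    # A sitemap is either a <urlset> or a <sitemapindex> in the sitemap namespace.
    valid_roots = (f"{{{SITEMAP_NS}}}urlset", f"{{{SITEMAP_NS}}}sitemapindex")
    if root.tag not in valid_roots:
        return False, f"unexpected root element: {root.tag}"

    if root.find(f".//{{{SITEMAP_NS}}}loc") is None:
        return False, "no <loc> entries found"

    return True, "ok"
```

To test a live sitemap, you could pass in the bytes returned by `urllib.request.urlopen(sitemap_url).read()`. A common failure this catches is a server that returns an HTML error page at the sitemap address instead of XML.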

Couldn’t Fetch Google Search Console Error: How to resolve it?

There are several ways to resolve the annoying Google Search Console error that prevents your sitemaps from being fetched. We present seven methods for resolving this issue in this section.

Method 1: Fix The Couldn’t Fetch Google Search Console Error

If you are experiencing a problem with your sitemaps not being fetched by the Google search console, this is a possible solution.

It works for some users but has been reported as ineffective for others. Follow these steps to use this method.

- Please log in to your Google Search Console account

- From the left panel/menu, select “Sitemaps.”

- Enter the URL of the sitemap that you wish to index in Add A Sitemap

- Add an extra forward slash after the last forward slash in the URL (for example, https://domain.com//sitemap.xml) and click the Submit button

- Repeat without the additional forward slash if the error persists

No need to worry; even with the extra forward slash, Google Search Console will index the correct domain name.
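To make the URL change in this method concrete, here is a tiny Python sketch of the transformation. The helper name `with_extra_slash` is purely illustrative, and it assumes the URL contains at least one forward slash.

```python
def with_extra_slash(sitemap_url: str) -> str:
    """Insert one extra "/" right after the last "/" in the URL."""
    head, slash, tail = sitemap_url.rpartition("/")
    return head + slash + "/" + tail


print(with_extra_slash("https://domain.com/sitemap.xml"))
# prints https://domain.com//sitemap.xml
```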

Method 2: Fix the couldn’t fetch Google Search Console error by renaming

If your sitemaps are valid but still cannot be read, changing the name of the sitemap file may resolve the Couldn’t Fetch error in Google Search Console.

Instead of submitting sitemap_index.xml, submit https://domain.com/?sitemap=1. This has the same effect as renaming the sitemap file.

Method 3: Fix the sitemap error by checking the size of the sitemap.xml file

An uncompressed sitemap may be at most 50 MB in size and contain at most 50,000 URLs. Larger websites can split their URLs across several sitemaps and list those sitemaps in a sitemap index.

If possible, fill each sitemap close to the maximum limit rather than splitting the site into many very small sitemaps.

When the maximum file size limit is exceeded, Google Search Console will raise an error message stating that the sitemap has exceeded the maximum file size limit.

Therefore, you should check the sitemap file size to avoid the Couldn’t Fetch Google Search Console Error.
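For a large site, the split into several sitemaps plus a sitemap index can be sketched as follows. This is an illustrative Python example under assumptions: the helper names and the child sitemap file names (`sitemap-1.xml`, `sitemap-2.xml`) are invented here, and the sketch only enforces the URL count, not the 50 MB size limit.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000


def split_urls(urls: list[str], limit: int = MAX_URLS_PER_SITEMAP) -> list[list[str]]:
    """Split a URL list into chunks that each fit into one sitemap."""
    return [urls[i:i + limit] for i in range(0, len(urls), limit)]


def build_sitemap_index(sitemap_urls: list[str]) -> bytes:
    """Build a sitemap index document that lists the child sitemaps."""
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        entry = ET.SubElement(root, "sitemap")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)
```

For example, 120,000 URLs would be split into chunks of 50,000, 50,000, and 20,000, and the index would then list the three resulting sitemap files. Chunking at the maximum keeps the number of sitemaps small, in line with the advice above.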

Method 4: Ensure that Robots.txt does not block the Sitemap

The sitemap and all the URLs listed under it must be accessible by Google. If that access is blocked by the robots.txt file, Google will display an error that states, “Sitemap contains URLs which are blocked by robots.txt.”

For instance, suppose your robots.txt contains the following:

User-agent: *

Disallow: /sitemap.xml

Disallow: /folder/

As you can see, the sitemap is blocked in this case, and all URLs within /folder/ are blocked as well. The robots.txt file is located in the root directory of the website.
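You can test rules like the ones in the example above with Python’s standard `urllib.robotparser` module. The sketch below is a rough check only: the helper name `blocked_urls` and the example.com URLs are assumptions, and Python’s parser uses simple prefix matching, so it may not behave exactly like Google’s own robots.txt parser.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /sitemap.xml
Disallow: /folder/
"""


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules block for the agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


urls = [
    "https://www.example.com/sitemap.xml",
    "https://www.example.com/folder/page.html",
    "https://www.example.com/about.html",
]
for url in blocked_urls(ROBOTS_TXT, urls):
    print("blocked:", url)
```

With these rules, the sitemap itself and the page under /folder/ are reported as blocked, while /about.html is not. Removing the two Disallow lines, or narrowing them, clears the problem.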

Method 5: Make sure that the Sitemap file supports UTF-8

It is a standard feature of all automatically generated sitemaps to support UTF-8. You should ensure the sitemap file is UTF-8 compliant if you create it manually.

A sitemap cannot contain URLs with unescaped special characters, such as *. To include such URLs, make sure the special characters are replaced with the appropriate escape codes.
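As a rough illustration of the two escaping steps, the Python sketch below first percent-encodes non-ASCII and special characters as UTF-8 bytes and then entity-escapes characters that are reserved in XML, such as the ampersand. The helper name `sitemap_safe_url` and the choice of characters left untouched in `safe` are assumptions made for this example.

```python
from urllib.parse import quote
from xml.sax.saxutils import escape


def sitemap_safe_url(url: str) -> str:
    """Percent-encode a URL as UTF-8, then entity-escape it for use in XML."""
    # Keep URL delimiters and existing percent-escapes as they are.
    encoded = quote(url, safe=":/?&=#%")
    # Replace XML-reserved characters such as & with entities (&amp;).
    return escape(encoded)


print(sitemap_safe_url("http://www.example.com/ümlat.html&q=name"))
# prints http://www.example.com/%C3%BCmlat.html&amp;q=name
```

If you build the sitemap with an XML library rather than by hand, the library usually performs the entity-escaping step for you, so only the percent-encoding is needed.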

For example, the following URL is percent-encoded from UTF-8, and its ampersand is entity-escaped:

http://www.example.com/%C3%BCmlat.html&amp;q=name

Method 6: Put a Sitemap at the root of your website

If you wish to ensure that all URLs on your website are included in the sitemap and are crawled by Google, you should place the sitemap in the root folder of your site.

For instance, you should not place the sitemap in a subfolder like this:

https://www.betterstudio.com/folder/sitemap.xml. A sitemap at this location can only list URLs under https://www.betterstudio.com/folder/. Any higher-level URL, such as https://www.betterstudio.com/ itself, will raise an error stating “URL not allowed.”

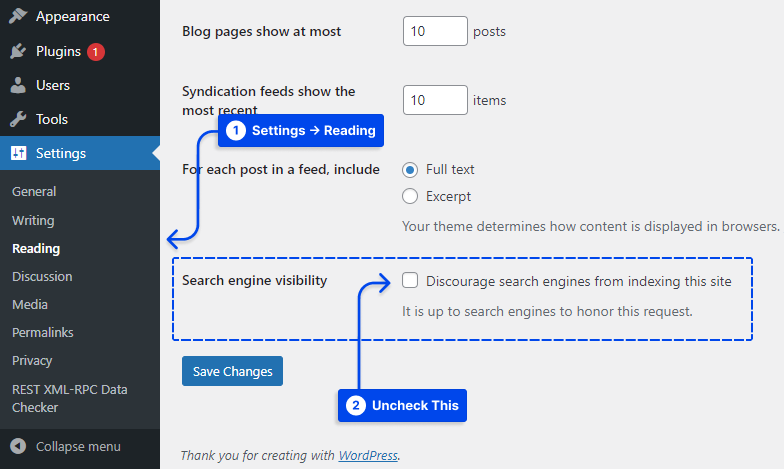
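The location rule can be expressed as a simple prefix check: a sitemap may only list URLs that start with the directory the sitemap itself lives in. The Python sketch below shows the idea; `allowed_in_sitemap` is a hypothetical helper, and the page URLs are invented examples.

```python
def allowed_in_sitemap(sitemap_url: str, page_url: str) -> bool:
    """Return True if page_url lies under the directory that holds the sitemap."""
    base = sitemap_url.rsplit("/", 1)[0] + "/"
    return page_url.startswith(base)


folder_sitemap = "https://www.betterstudio.com/folder/sitemap.xml"
root_sitemap = "https://www.betterstudio.com/sitemap.xml"

print(allowed_in_sitemap(folder_sitemap, "https://www.betterstudio.com/folder/page.html"))
print(allowed_in_sitemap(folder_sitemap, "https://www.betterstudio.com/about.html"))
print(allowed_in_sitemap(root_sitemap, "https://www.betterstudio.com/about.html"))
```

The first and third checks print True and the second prints False, which is why a sitemap at the root can cover the whole site while one in /folder/ cannot.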

Method 7: Untick the Search Engine Visibility Option

A WordPress user should be aware of the basic settings. Within the settings section, there is one critical setting that you should untick: Discourage Search Engines From Indexing.

- Open your WordPress dashboard.

- Go to the Reading section in Settings.

- Finally, untick the Discourage Search Engines From Indexing option.

Once you have unticked search engine visibility, you need to resubmit your sitemap to Google Search Console.
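To confirm that the change took effect, you can look for a robots meta tag containing noindex in your home page’s HTML. The Python sketch below assumes that the site signals the setting through such a meta tag, which may depend on your WordPress version and plugins; the class and function names are made up for this example.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collect the directives of every <meta name="robots"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            self.directives += [d.strip().lower() for d in content.split(",")]


def is_noindexed(html: str) -> bool:
    """Return True if the page carries a robots meta tag with noindex."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives
```

Pass in the HTML source of your home page; if `is_noindexed` still returns True after you have unticked the option, a caching layer or an SEO plugin may be adding the tag.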

Still not resolved?

Suppose you have tried the methods above but still receive the Couldn’t Fetch error for your sitemap in Google Search Console. In that case, consider the manual method.

Using this method, you must manually create XML sitemaps for your website and upload them to the domain’s root directory. The sitemap should then be submitted to the Search Console.
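If you would rather generate the file with a script than type it by hand, a minimal sitemap can be produced with Python’s standard library. This is a sketch under assumptions: `build_sitemap` is a hypothetical helper, it writes only the required `<loc>` element for each page, and you would supply your own list of absolute URLs.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> bytes:
    """Build a UTF-8 encoded XML sitemap from a list of absolute URLs."""
    root = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(root, "url")
        # ElementTree entity-escapes characters such as & automatically.
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)
```

Write the returned bytes to a file named sitemap.xml (opened in binary mode), upload it to the root directory of your domain, and submit its URL in Search Console.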

Conclusion

These were the seven most effective ways to resolve the Sitemap.xml Couldn’t Fetch Error in Google Search Console.

This article aimed to assist you in solving your Couldn’t Fetch Sitemap Error, but if your sitemap could not be read after this, please contact us, and we will be sure to assist you.

We will be more than happy to answer any other questions you may have regarding blogging in the comments. Please share this article on social media if you enjoyed it. You can also follow us on Facebook and Twitter!